ATTiny85 First Sketch

First successful try with ATTiny85, programmed through an Arduino UNO. Followed instructions found here


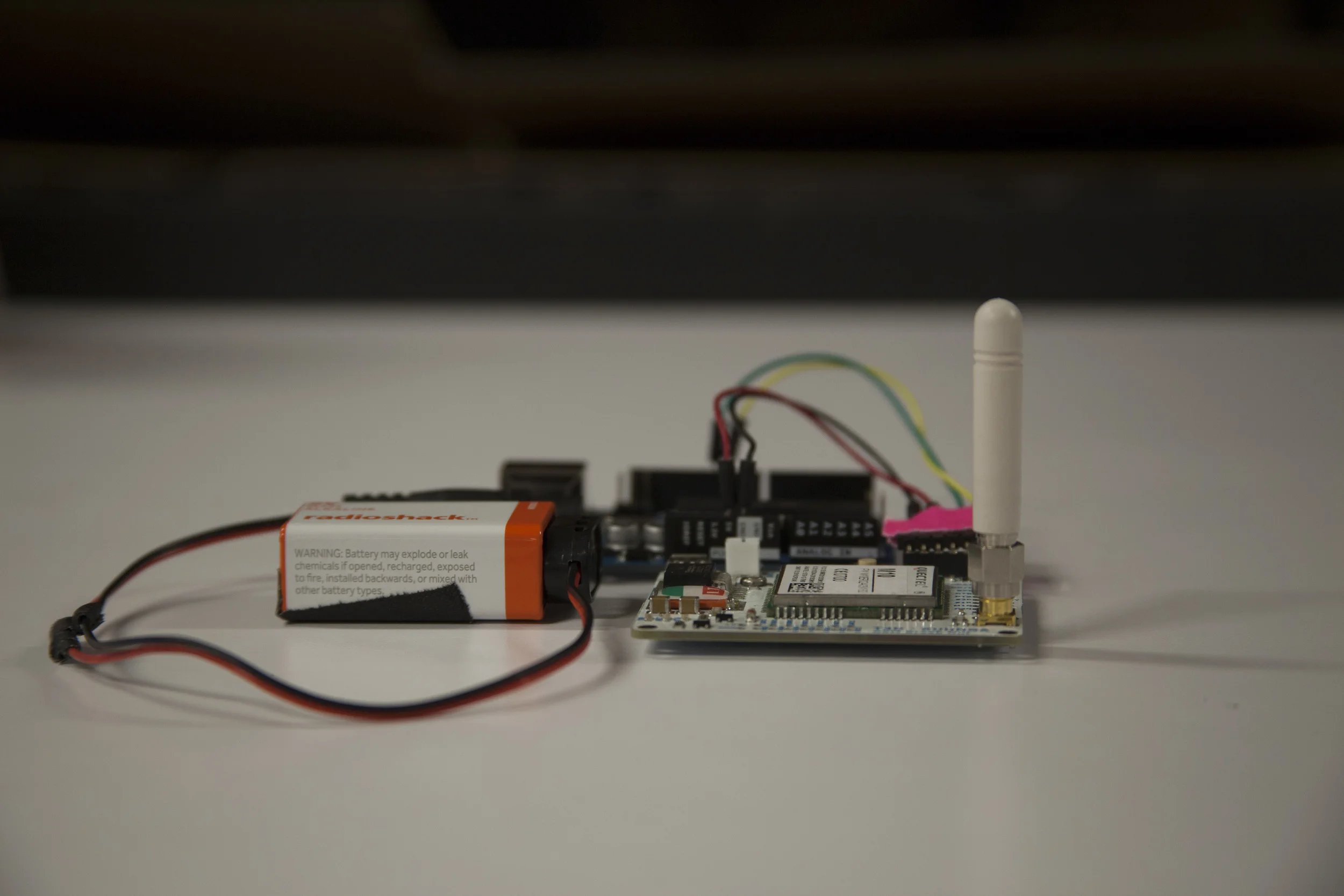

For our GSM class final, Clara, Karthik and I created a geo-fence hooked up to an Arduino Uno.

If you're receiving garbled data when reading from the GSM board through the Arduino, check whether both boards are running at the same baud rate (9600). To change the baud rate of the GSM board (Quectel 10M) through an FTDI adapter and CoolTerm:

Now connect the GSM board to the chosen SoftwareSerial pins (10 and 11), then set the fence's center point (homeLat and homeLon), the fence's size as a radius in kilometers (thresholdDistance), and the phone number it will be controlled from (phoneNumber). Finally, upload the sketch and open the Serial Monitor. The code can be found in this GitHub repo. Enjoy!
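The fence test itself comes down to measuring the distance from the current GPS fix to the center point and comparing it with thresholdDistance. The repo's own implementation isn't reproduced here; what follows is a minimal sketch assuming the haversine formula on a spherical Earth (mean radius 6371 km). homeLat, homeLon and thresholdDistance mirror the variables above, while distanceKm and isInsideFence are hypothetical helper names. It is plain C++ so it can be checked on a computer; the same math runs on the UNO, keeping in mind that double there is only a 32-bit float, which can limit precision for very small fences.

```cpp
#include <cmath>

// Mean Earth radius in kilometers (assumption: spherical Earth model).
const double kEarthRadiusKm = 6371.0;

// Convert degrees to radians.
double toRadians(double deg) {
  return deg * 3.14159265358979323846 / 180.0;
}

// Great-circle distance in km between two lat/lon points given in degrees,
// computed with the haversine formula.
double distanceKm(double lat1, double lon1, double lat2, double lon2) {
  double dLat = toRadians(lat2 - lat1);
  double dLon = toRadians(lon2 - lon1);
  double a = std::sin(dLat / 2) * std::sin(dLat / 2) +
             std::cos(toRadians(lat1)) * std::cos(toRadians(lat2)) *
             std::sin(dLon / 2) * std::sin(dLon / 2);
  double c = 2 * std::atan2(std::sqrt(a), std::sqrt(1 - a));
  return kEarthRadiusKm * c;
}

// Hypothetical helper: true while the fix lies within the fence radius.
bool isInsideFence(double lat, double lon,
                   double homeLat, double homeLon, double thresholdDistance) {
  return distanceKm(homeLat, homeLon, lat, lon) <= thresholdDistance;
}
```

As a sanity check, a point one degree of longitude east of (0, 0) comes out at about 111.19 km.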

The hardware used for this project was an Arduino UNO and one of Benedetta's custom GSM boards with GPS enabled. This setup could potentially run stand-alone; however, make sure beforehand that the power source has enough capacity to run the setup for the intended time.

A simple snippet that lights up an LED when receiving an SMS, using one of Benedetta's GSM shields.

The LED is on pin 13 of the Arduino board. After several failed attempts at writing from the Arduino Serial Monitor, we decided to do it through CoolTerm.

This is the code:

```cpp
#include <SoftwareSerial.h>

// GSM shield on pin 2 (RX) and pin 3 (TX)
SoftwareSerial mySerial(2, 3);

char inChar = 0;
// SMS body used by the commented-out sending code (Spanish, roughly "what's up, HUMBA!")
char message[] = "que pasa HUMBA!";
// Time the LED was last switched on
unsigned long ledOnSince = 0;

void setup()
{
  Serial.begin(9600);
  Serial.println("Hello Debug Terminal!");

  // set the data rate for the SoftwareSerial port
  mySerial.begin(9600);

  pinMode(13, OUTPUT);

  // // Turn off echo from GSM
  // mySerial.print("ATE0");
  // mySerial.print("\r");
  // delay(300);
  //
  // // Set the module to text mode
  // mySerial.print("AT+CMGF=1");
  // mySerial.print("\r");
  // delay(500);
  //
  // // Send the following SMS to the following phone number
  // mySerial.write("AT+CMGS=\"");
  // // CHANGE THIS NUMBER! CHANGE THIS NUMBER! CHANGE THIS NUMBER!
  // // 129 for domestic #s, 145 if with + in front of #
  // mySerial.write("6313180614\",129");
  // mySerial.write("\r");
  // delay(300);
  // // TYPE THE BODY OF THE TEXT HERE! 160 CHAR MAX!
  // mySerial.write(message);
  // // Special character to tell the module to send the message
  // mySerial.write(0x1A);
  // delay(500);
}

void loop() // run over and over
{
  // Relay each byte from the GSM module to the debug terminal and light the LED.
  // The blink avoids delay() so incoming bytes aren't dropped from
  // SoftwareSerial's 64-byte receive buffer during long responses.
  if (mySerial.available()) {
    inChar = mySerial.read();
    Serial.write(inChar);
    digitalWrite(13, HIGH);
    ledOnSince = millis();
  }

  // Switch the LED off 20 ms after the last received byte
  if (millis() - ledOnSince >= 20) {
    digitalWrite(13, LOW);
  }

  // Relay anything typed in the terminal to the GSM module
  if (Serial.available() > 0) {
    mySerial.write(Serial.read());
  }
}
```
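Note that the loop above lights the LED for every byte the module sends, not only for SMS arrivals. If you only want it on a new message, one option is to watch for the +CMTI unsolicited result code, which GSM modules send when a new SMS is stored, provided new-message indications are enabled with AT+CNMI (the exact parameters depend on the module, so check its AT command manual). This is a host-side C++ illustration with a hypothetical SmsNotifier class; on the UNO you would swap std::string for a fixed char buffer.

```cpp
#include <cstddef>
#include <string>

// Hypothetical line-based parser: feed it every byte read from the GSM
// module and it reports when a complete "+CMTI:" line (new SMS stored) arrives.
class SmsNotifier {
 public:
  // Returns true exactly when a full line starting with "+CMTI:" ends.
  bool feed(char c) {
    if (c == '\r') return false;              // ignore carriage returns
    if (c != '\n') {                          // accumulate until end of line
      if (line_.size() < kMaxLine) line_ += c;
      return false;
    }
    bool isNewSms = line_.rfind("+CMTI:", 0) == 0;  // line starts with the URC
    line_.clear();
    return isNewSms;
  }

 private:
  static constexpr std::size_t kMaxLine = 64;  // cap memory, as on an Arduino
  std::string line_;
};
```

In the loop, you would call feed() with each byte from mySerial and switch the LED on only when it returns true.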

Time's running out! Will your concentration drive the Needle fast enough? Using the Mindwave, a consumer EEG headset, visualize how your concentration level drives the speed of the Needle's arm and, maybe, pops the balloon!

Second UI Exploration

I designed, coded and fabricated the entire experience as an excuse to explore how people approach interfaces for the first time and imagine how things could or should be used.

The current UI focuses on the experience's challenge: 5 seconds to pop the balloon. The previous UI focused more on visually communicating the concentration signal (from now on called the ATTENTION SIGNAL).

This is why the timer's size, position and color are so prominent. The timer is bigger than both the Attention signal and the Needle's digital representation, and it is positioned on the left so people will read it first. Even though the Attention signal is visually represented, the recurring question at NYC Media Lab's "The Future of Interfaces" and ITP's "Winter Show" was: what should I think about?

What drives the Needle is the intensity of concentration, or overall electrical brain activity, which can be raised in different ways, such as solving basic math problems (a recurring, successful on-site exercise). More importantly, this question might point to an underlying lack of feedback from the physical device itself, so a more revealing question would be: how could feedback in BCIs be better? Another reflection on this interactive experience: what would happen if this playful challenge were addressed differently, by moving the Needle only when Attention exceeds a certain threshold?
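To make the two options comparable, here is a sketch of both update rules for the arm, assuming the 0 to 100 Attention range the Mindwave reports. proportionalStep models the described behavior (speed grows with Attention) and thresholdStep models the alternative (a fixed speed, but only above a threshold). Both names and the step sizes are hypothetical.

```cpp
#include <algorithm>

// Hypothetical per-update step of the Needle's arm, in degrees.
// attention: Attention value, assumed to range from 0 to 100.

// Described behavior: the arm's speed grows with Attention.
double proportionalStep(int attention, double maxStepDeg) {
  int a = std::max(0, std::min(attention, 100));  // clamp to 0..100
  return maxStepDeg * a / 100.0;
}

// Alternative: a fixed speed, but only while Attention is above a threshold.
double thresholdStep(int attention, int threshold, double stepDeg) {
  return attention > threshold ? stepDeg : 0.0;
}
```

The threshold version gives a clearer on/off signal, at the cost of showing no progress at all below the threshold.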

This installation pursues playful collaboration. By placing the modules in arbitrary configurations, people can collaboratively create endless layouts that generate perceivable chain reactions. The installation is triggered through a playful gesture similar to bocce, where spheres can set off the layout anywhere in the installation.

After an apparent success (in one specific context) and a subsequent failure (in an altered context), the project turned to a functional alternative. The following process illustrates this better.

These images show the initially planned circuit, which included a working sound trigger with a static threshold. We also experimented with Adafruit's Trinket, aiming for circuit simplification, cost-effectiveness and miniaturization. This tiny microcontroller board is built around an ATTiny85, conveniently breadboard-friendly and preloaded with a bootloader. In the beginning we were able to upload digital output sequences to drive the speaker and LED included in the circuit design. However, the main obstacle, which we managed to overcome in the end, was reading the analog input signal from the microphone. The last image shows the addition of a speaker amplifier to improve the speaker's output.

1. The functional prototype, which includes a listening window: if the microphone senses a value greater than the set threshold, it stops listening for a set time.

2. The difference between a normal speaker output signal and an amplified speaker output signal.
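The listening window in the first item can be sketched as a threshold plus a lockout timer. This is a hypothetical model rather than the original Trinket code: ListeningWindow and the example values (a threshold of 600 on the 0 to 1023 analogRead scale, a 500 ms lockout) are placeholders. Computing nowMs - lastTriggerMs with unsigned arithmetic keeps it correct when millis() wraps around.

```cpp
// Hypothetical model of a module's "hearing" logic: a reading above the
// threshold fires a trigger, after which the module stops listening for
// lockoutMs milliseconds.
class ListeningWindow {
 public:
  ListeningWindow(int threshold, unsigned long lockoutMs)
      : threshold_(threshold), lockoutMs_(lockoutMs) {}

  // Call with each microphone sample and the current time (e.g. millis()).
  bool update(int sample, unsigned long nowMs) {
    if (deaf_ && nowMs - lastTriggerMs_ < lockoutMs_) return false;  // still deaf
    deaf_ = false;
    if (sample > threshold_) {
      deaf_ = true;            // start the lockout
      lastTriggerMs_ = nowMs;
      return true;
    }
    return false;
  }

 private:
  int threshold_;
  unsigned long lockoutMs_;
  unsigned long lastTriggerMs_ = 0;
  bool deaf_ = false;
};
```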

After the first full tryout, it was clear that a dynamic threshold was needed, one where the trigger value adapts to the ambient sound level. However, the microphone broke one day before the deadline, so we never got to try this tentative solution (even though there's initial code for it).
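A dynamic threshold along those lines could track the ambient level with an exponential moving average and fire only when a reading rises a fixed margin above it. This is a sketch of one possible approach, not the initial code mentioned above. AdaptiveThreshold, alpha and margin are hypothetical, and level is assumed to be a loudness value (for example, the microphone's deviation from its resting reading).

```cpp
// Hypothetical adaptive threshold: tracks the ambient level with an
// exponential moving average and triggers only when a reading exceeds
// that baseline by a fixed margin.
class AdaptiveThreshold {
 public:
  AdaptiveThreshold(double initialBaseline, double alpha, double margin)
      : baseline_(initialBaseline), alpha_(alpha), margin_(margin) {}

  bool update(double level) {
    bool triggered = level > baseline_ + margin_;
    // Only non-triggering readings update the baseline, so a loud hit
    // doesn't immediately raise the bar for the next module's sound.
    if (!triggered) baseline_ += alpha_ * (level - baseline_);
    return triggered;
  }

  double baseline() const { return baseline_; }

 private:
  double baseline_;
  double alpha_;   // 0 < alpha <= 1; smaller means slower adaptation
  double margin_;
};
```

Starting from a baseline of 100 with alpha = 0.5 and margin = 50, a reading of 180 fires in a quiet room. After a few louder ambient readings (120, then 140 twice), the baseline climbs to 132.5 and the same 180 no longer triggers.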

Plan B: use the Call-To-InterAction event instead. In other words, use the collision and the vibration it generates to trigger the modules through a piezo. Here's the code.
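The piezo approach is close to Arduino's classic Knock example, which compares analogRead() against a threshold. Because a single collision makes the piezo ring for a while, one refinement worth considering is hysteresis: count a hit when the reading crosses the threshold, then re-arm only once it drops below a lower level. This is a hypothetical sketch rather than the code linked above. KnockDetector, countKnocks and the threshold values are placeholders.

```cpp
#include <vector>

// Hypothetical collision detector for a piezo with hysteresis.
class KnockDetector {
 public:
  KnockDetector(int threshold, int rearmBelow)
      : threshold_(threshold), rearmBelow_(rearmBelow) {}

  // Returns true on the reading where a new collision is detected.
  bool update(int reading) {
    if (!armed_) {
      if (reading < rearmBelow_) armed_ = true;  // vibration has died down
      return false;
    }
    if (reading >= threshold_) {
      armed_ = false;  // ignore the rest of this collision's ringing
      return true;
    }
    return false;
  }

 private:
  int threshold_;
  int rearmBelow_;
  bool armed_ = true;
};

// Counts collisions in a recorded stream of analogRead() values.
int countKnocks(const std::vector<int>& readings, int threshold, int rearmBelow) {
  KnockDetector detector(threshold, rearmBelow);
  int count = 0;
  for (int r : readings) {
    if (detector.update(r)) ++count;
  }
  return count;
}
```

With the readings {0, 10, 300, 150, 220, 40, 0, 500, 20}, a threshold of 200 and a re-arm level of 50 count two collisions. Without hysteresis (re-arming at 200), the ringing at 220 would be counted as a third.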

A couple of videos illustrating the key collision moments that trigger the beginning of a thrilling pursuit.

And because sometimes Plan B also glitches... Special thanks to Catherine, Suprit and Rubin for playtesting.

This is the basic behavior of the system, where sound is the medium of communication. The Trigger is the element that initiates the chain reaction: it translates the rolling motion into the sound that triggers the modules lying on the ground.

This is our initial timetable. We've divided the project's development into two main blocks: the trigger element ([T] in the timeline) and the module ([M]). Follow this link for a detailed description of each activity in our timetable.

This Bill of Materials is intended for an initial prototype of one Trigger and two Modules. There's still a lot to figure out, but so far this is how it looks. You can follow this link for future reference.

While studying Interactive Technologies at NYU's Interactive Telecommunications Program, I worked on an interactive installation that used a bowling gesture to trigger scattered half-spheres and generate a musical experience. It is an evolved, collaborative take on the Generative Sculptural Synth idea. The ideal concept is an interactive synthesizer made up of replicated sound-generating modules, triggered by a sphere that creates a chain reaction throughout the installation's layout.

Methodology: Iterative Concept and Prototyping

Tools: Arduino and Little Bits

Deliverables: Prototype of modules that communicate and trigger each other through motion and sound range

It started out as a reconfigurable soundscape and evolved into an interactive, bocce-like generative instrument. Here's an inside scoop of the brainstorming session where my teammate and I sought common ground (1. Roy's ideal pursuit, 2. my ideal pursuit, 3. converged ideal).


After the complete failure of the DIY audio input, I realized the (limited) convenience of prototyping with littleBits. This way, I could concentrate on the trigger event rather than getting stuck sketching circuits. I was able to program a simple timer for the module to "hear" (a boolean triggered by the microphone) and a timer for it to "speak" (a boolean that generates a tone). What I learned about the limitations of the littleBits sensors is twofold. They offer a Sound Trigger and a conventional Microphone. Both bits come with their circuitry already solved, which turned out to be useful but limiting. The Sound Trigger has an adjustable gain, an embedded (uncontrollable) 2-second timer and a pseudo-boolean output signal. So even though you can adjust its sensitivity, you can't actually work with its values in the Arduino IDE. The Microphone bit had an offset (its readings sat around a serial value of 515), but its gain was rather insensitive.
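Because the Microphone bit's output sits on that offset, loudness is better read as the distance from the resting value than as the raw analogRead() number. A minimal sketch, assuming a resting value of 515 as observed above; kMicBias and peakAmplitude are hypothetical names, and the bias should be calibrated for your own bit.

```cpp
#include <cstdlib>
#include <vector>

// Resting value of the Microphone bit on the 0-1023 analogRead scale
// (assumption based on the ~515 readings observed above).
const int kMicBias = 515;

// Peak deviation from the resting value over a window of samples:
// a rough loudness estimate that ignores the DC offset.
int peakAmplitude(const std::vector<int>& samples, int bias = kMicBias) {
  int peak = 0;
  for (int s : samples) {
    int deviation = std::abs(s - bias);
    if (deviation > peak) peak = deviation;
  }
  return peak;
}
```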

This is why, when using the convenient Sound Triggers, the pitch is proportional to the triggering distance. In other words, lower pitches trigger modules at closer range, and vice versa. However, since the Sound Trigger bits are pseudo-boolean, no frequency analysis is possible.

This is a follow-up on the Generative Propagation concept. With these exercises I intended to answer two questions:

The trigger threshold can be manipulated by manually controlling the microphone's gain, that is, the amount of voltage passed to the amplifier (potentiometer to IC).

By manipulating this potentiometer, the sensitivity of the microphone can be controlled.

The tempo can be established by timing the trigger's availability. By setting a timer that allows the trigger variable to listen again, the speed or rate at which the entire installation reproduces sounds can be established.

Can unpredictable melodies be created out of Constellaction’s concept?

Composition

Modules will bridge through consecutive emissions and receptions of sound. In the end, the purpose is to create a cyclic chain that sets the stage for a greater pursuit: creating a generative audio experience, like a tangible tone matrix. In this exercise I will explore simple initial attributes such as trigger threshold and tempo.

Concept

How can sound modules resemble basslines through replication? For the first phase of this project, I will explore ways of creating a module that, when triggered by a sound, generates auditory chain reactions.

Context

The general idea is to give these modules different behaviors, to the extent that they become generative. In this particular exercise (the Mid-Term), the idea is to create looped compositions that resemble basslines. By scaling up these modules, emergent and unpredictable scenarios can appear.

BOM (Bill Of Materials)

Mind the Needle is a project exploring the user interfaces of commercially emerging EEG devices. After setting the goal as popping a balloon with your mind (mapping the Attention signal to a servo with an arm that holds a needle), the project focused on better understanding how people approach these new interfaces and how we can start creating better practices around BCIs (Brain Computer Interfaces). Mind the Needle came to fruition after considering different scenarios. It focuses on finding the best way of communicating progression through the Attention signal. In the end, we decided to portray only forward movement, even though the Attention signal varies constantly. In other words, the amount of Attention only affects the speed at which the arm moves, not its actual position. Again, this is why the arm can only move forward: to better communicate progression in such intangible, rather ambiguous interactions (such as brain wave signals), which in the end mitigates frustration.
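The forward-only mapping can be sketched as integrating a speed rather than setting a position. This is a hypothetical model, not the project's code: ForwardOnlyArm, the 0 to 100 Attention range and the maxDegPerSec value are assumptions, and on the board the returned angle would be rounded and sent to the servo on each update.

```cpp
#include <algorithm>

// Hypothetical model of the Needle's forward-only arm: Attention (assumed
// 0 to 100) sets how fast the arm advances, never where it is, so a drop
// in Attention slows the arm down but never moves it backward.
class ForwardOnlyArm {
 public:
  explicit ForwardOnlyArm(double maxDegPerSec) : maxDegPerSec_(maxDegPerSec) {}

  // Advance the arm over dtSec seconds at a speed scaled by Attention;
  // returns the new angle in degrees, capped at 180.
  double update(int attention, double dtSec) {
    int a = std::max(0, std::min(attention, 100));
    angle_ += maxDegPerSec_ * (a / 100.0) * dtSec;
    angle_ = std::min(angle_, 180.0);
    return angle_;
  }

  double angle() const { return angle_; }

 private:
  double maxDegPerSec_;
  double angle_ = 0.0;
};
```

With maxDegPerSec = 60, one second at full Attention moves the arm to 60°, a second at zero Attention leaves it there, and the angle never exceeds 180°.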

The first layout consisted of two arcs of the same size, splitting the screen in two. The arc on the left showed the user's Attention feedback, and the other arc was the digital representation of the arm.

After the first draft, and some feedback from people trying just the Graphical User Interface, the need for the entire setup was clear. The first tryouts with the servo then yielded really important insights about the GUI. Even though the visual language (Perceptual Aesthetic) did convey progression and forward movement, the signs behind it remained unclear: people still expected the servo to move according to the Attention signal. This is why in the final GUI this signal resembles a speedometer.

Physical Prototype

Sidenote: to ensure a successful popping strike, the servo should make a quick slash at the end (once θ ≥ 180°): set θ = 170°, wait 10 ms (delay(10)), then set θ = 178°.

Images taken from their Blog Post

After a lot of searching, I stumbled upon a company that takes interactive products for retail settings beyond tactile interfaces toward tangible ones. The installation is triggered by lifting one of the products sold in the store, which exposes an album of first-person stories about various brands' products. Even though it offers an innovative consumer experience, after half an hour of waiting for someone to try it, I finally decided to take it for a spin. The product is a sealed black box containing what I imagine is a projector, a computer and a camera. The main idea behind it is to turn any surface into an interactive tangible user interface. Basically, it is a usable interactive experience with catchy stories built on top of a tracking framework.

The fact that this product interfaces with real, tangible artifacts opens up an entire realm of possibilities, even though here it was only used to trigger a strictly tactile command interface. This tactile 2D interface had the proper affordances to manipulate the experience easily. Its results were easy to notice when navigating and selecting different features, and because it was built on top of the tactile interface paradigm, it was really easy to learn. However, it lacked the first principle of interaction design: it wasn't perceivable as an interactive display at first sight. I'm not really sure why, but its call to action (or lack of one) left customers adrift. Even though the product had a blinking text prompt, roughly 1/10 of the display's height, inviting people to interact ("Please lift to read the stories"), how to start the interactive experience still wasn't obvious. Maybe it was because it resembled a light installation of the kind you're not supposed to touch, but I'm not 100% certain. Overall, the 5-minute experience was entertaining.

The hypothesis I had before approaching the product was that this interface should aim for what Norman calls the affective approach: since the context and goal are retail, it is not a scenario that requires serious, concentrated effort to reach its goal. Along these lines, the product balances beauty and usability fairly well, conveying both easy-going use and contemplation.

After tinkering with a conventional servo to read its position data, I'm still figuring out how to apply this feedback reading to an aesthetic application. Even though I'm unsure how to implement it in the former concept, it certainly sparks interesting interactive possibilities. The code can be found in this GitHub repo.

I also started a sketch of a servo triggered by a digital input. When triggered, the servo moves back and forth across a 30° range. The idea to explore further is to modulate its speed with an analog input, and maybe add a noise effect (most likely Perlin noise).
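The planned sweep could look like the following sketch: the analog reading sets the step size, so a higher reading sweeps faster, and the direction flips at either end of the 30° range. Sweep, maxStepDeg and the 0 to 1023 input range are assumptions, and noise is not included. On the board, the returned angle would be rounded and passed to the Servo library's write().

```cpp
#include <algorithm>

// Hypothetical back-and-forth sweep over a fixed range (30 degrees by
// default). Each call advances the angle by a step derived from an analog
// reading (0-1023), reversing direction at either end of the range.
class Sweep {
 public:
  explicit Sweep(double startDeg, double rangeDeg = 30.0)
      : minDeg_(startDeg), maxDeg_(startDeg + rangeDeg), angle_(startDeg) {}

  double update(int analogValue, double maxStepDeg) {
    int v = std::max(0, std::min(analogValue, 1023));
    double step = maxStepDeg * v / 1023.0;  // higher reading, faster sweep
    angle_ += dir_ * step;
    if (angle_ >= maxDeg_) { angle_ = maxDeg_; dir_ = -1; }
    if (angle_ <= minDeg_) { angle_ = minDeg_; dir_ = 1; }
    return angle_;
  }

 private:
  double minDeg_, maxDeg_, angle_;
  int dir_ = 1;
};
```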

I've integrated an exercise from Automata into the second lab's exercises (Digital and Analog Input). Here, I've hacked a servo (connected a wire to its potentiometer) to read its rotational position. The code, excerpted from the Automata class, records its movement and plays it back.
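The Automata code itself isn't shown here, but the record-and-replay idea can be sketched as follows: store the raw potentiometer readings at a fixed interval, then convert each one back to an angle for playback. MotionRecorder and the calibration readings feedbackMin/feedbackMax (the values read at 0° and 180°) are hypothetical and differ from servo to servo. The conversion is the same linear, integer mapping Arduino's map() performs, plus clamping. On the UNO, a fixed-size array would replace std::vector.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical record-and-replay for a hacked servo. While recording, raw
// potentiometer feedback (analogRead, 0-1023) is stored; on playback each
// sample is converted back to a servo angle using two calibration readings.
class MotionRecorder {
 public:
  MotionRecorder(int feedbackMin, int feedbackMax)
      : fbMin_(feedbackMin), fbMax_(feedbackMax) {}

  void record(int feedback) { samples_.push_back(feedback); }

  // Angle (0-180) for the i-th recorded sample, linearly mapped between the
  // calibration readings and clamped to the servo's range.
  int angleAt(std::size_t i) const {
    long num = static_cast<long>(samples_[i] - fbMin_) * 180;
    int angle = static_cast<int>(num / (fbMax_ - fbMin_));
    if (angle < 0) angle = 0;
    if (angle > 180) angle = 180;
    return angle;
  }

  std::size_t size() const { return samples_.size(); }

 private:
  int fbMin_, fbMax_;
  std::vector<int> samples_;
};
```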

The entire project, with instructions, can be found in Adafruit's tutorial.

Circuit drawing, excerpted from Adafruit's tutorial.

“The answers should be given by the design, without any need for words or symbols, certainly without any need for trial and error.” Don Norman

The answers Don Norman refers to are PERCEIVED through affordances. As he describes them, affordances are “primarily those fundamental properties that determine just how the thing could possibly be used [, and] provide strong clues to the operations of things”. Thus, affordances enable the transition from the first principle to the second (Perceivability to PREDICTABILITY). It's thanks to these visible cues in products (affordances) that people are able to interact with them (operate and manipulate). Thanks to FEEDBACK (the third principle), people can understand what happened and know how to recover from errors (the machine's) and mistakes (people's). Through repeated interaction, people LEARN how to use a product, and thanks to CONSISTENT standard practices among similar products, they can transfer that knowledge from one type of product to another.

Besides usage standards and best practices, Don Norman addresses the importance of affect in the design process. He points out the nuances between negative and positive affect, and stresses the importance of good human-centered design when addressing stressful situations. In the end, he emphasizes that “[t]rue beauty in a product has to be more than skin deep, more than a façade. To be truly beautiful, wondrous, and pleasurable, the product has to fulfill a useful function, work well, and be usable and understandable.”

I like to think of Interaction Design as the work of creating models and experiences that closely represent people's imagination or conceptual models. Chris Crawford’s metaphor of conversation is the most concise and enlightening explanation I’ve read so far. Luminaries of the Interaction Design realm such as Bill Moggridge or Gillian Crampton Smith offer wonderful explanations, yet Crawford’s self-contained metaphor gives IxD an elegant simplicity with just one word. Of the implications of conversation that Crawford describes, a couple of concepts stand out: the cyclic nature of the conversation between actors, and fun as a key qualitative factor for highly interactive designs. To sustain this cycle, he stresses that three equally necessary factors (listen, think and speak) must all be present for a conversation to be considered good. This is certainly an entertaining challenge when designing interactive works.

Crawford goes on to pinpoint the revealing differences between IxD and similar disciplines such as Interface Design. The difference lies specifically in the in-between factor of a conversation: thinking. Interaction Design differs from Interface Design by addressing how the work will behave, through algorithms. He assembles an articulate comparison that sets the stage for an analogy: Interaction Design is to Interface Design as Industrial Design is to Graphic Design. As he puts it, “[...] the user interface designer considers form only and does not intrude into function, but the interactivity designer considers both form and function in creating a unified design.” It is a systemic approach that never gets easy, yet is enormously fulfilling whenever “people identify more closely with it [interactive work] because they are emotionally right in the middle of it.” In other words, interaction design is amazing thanks to the engaging, earnest experiences it provokes.

In the end, Crawford closes with a cautious call to action, encouraging the reader to “exploit interactivity to its fullest and not dilute it with secondary business.” This is exactly what prodigious creator and visionary Bret Victor denounces in today's consumer tech landscape. He is alarmed by the status quo’s acceptance of a narrow vision of the future of interaction, one confined behind a flat surface. Victor advocates for tools that “addresses human needs by amplifying human capabilities”. It is through the properties of everyday objects that Interaction Design feedback should be crafted. He wittily highlights haptic feedback and explains a typology of grips (power, precision and hook grips). These premises will help Interaction Design craft more intuitive works where, hopefully, people can seamlessly converse with (fingers crossed) other people and seamlessly experience works and devices. Victor wraps up with an encouraging suggestion to “be inspired by the untapped potential of human capabilities” and asks: “[w]ith an entire body at your command, do you seriously think the Future Of Interaction should be a single finger?”

Even though gestural Natural Interfaces, such as Disney Research's lovely concept, suggest an interesting future for interaction, there is still fine-tuning to do within the Beneficial Aesthetic realm.

Aireal: Interactive Tactile Experiences in Free Air. (n.d.). Retrieved September 9, 2014.

Crawford, C. (2002). The Art of Interactive Design: A Euphonious and Illuminating Guide to Building Successful Software. San Francisco: No Starch Press.

Victor, B. (2011, November 8). A Brief Rant on the Future of Interaction Design. Retrieved September 8, 2014.

For children's month, we created a giant box to make a stronger bond between children and their parents. I was the Interactive Lead for this project making sure the hardware and software would run swiftly for a month and a half.

Methodology: Iterative Development

Tools: Arduino, OpenFrameworks

Deliverables: Interactive Experience triggered by levers and buttons that took children and parents through a journey

Through a collaborative experience, people embarked on a journey into the world of dreams and imagination. To communicate children's boundless imagination and their appropriation of everyday objects, we built a giant cardboard box as the ship, with two control panels whose knobs and buttons were made out of plastic bottles and other everyday objects.

Together with two Interaction Designers, we coded the project's software in OpenFrameworks and the hardware in Arduino. To ensure collaboration inside the box, both panels were made wide enough that they could only be operated by at least two people. The first panel has two starting knobs and two launching/landing levers. The other panel is as wide as the first one and has four buttons that light up in a sequence. Lit buttons have to be pressed at the same time to defeat the violent threat in the journey.
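The simultaneous-press rule can be sketched as a check that every lit button was pressed and that all those presses landed within a short window of each other. This is a hypothetical illustration, not the project's Arduino/OpenFrameworks code: simultaneousPress, the -1 "not pressed" marker and the window length are assumptions.

```cpp
#include <array>

// Number of buttons on the second panel.
const int kButtons = 4;

// Hypothetical check: true when every lit button has a press timestamp
// (-1 means "not pressed yet") and all those presses fall within windowMs.
bool simultaneousPress(const std::array<bool, kButtons>& lit,
                       const std::array<long, kButtons>& pressedAtMs,
                       long windowMs) {
  long earliest = 0, latest = 0;
  bool any = false;
  for (int i = 0; i < kButtons; ++i) {
    if (!lit[i]) continue;
    if (pressedAtMs[i] < 0) return false;  // a lit button is still unpressed
    if (!any || pressedAtMs[i] < earliest) earliest = pressedAtMs[i];
    if (!any || pressedAtMs[i] > latest) latest = pressedAtMs[i];
    any = true;
  }
  return any && latest - earliest <= windowMs;
}
```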