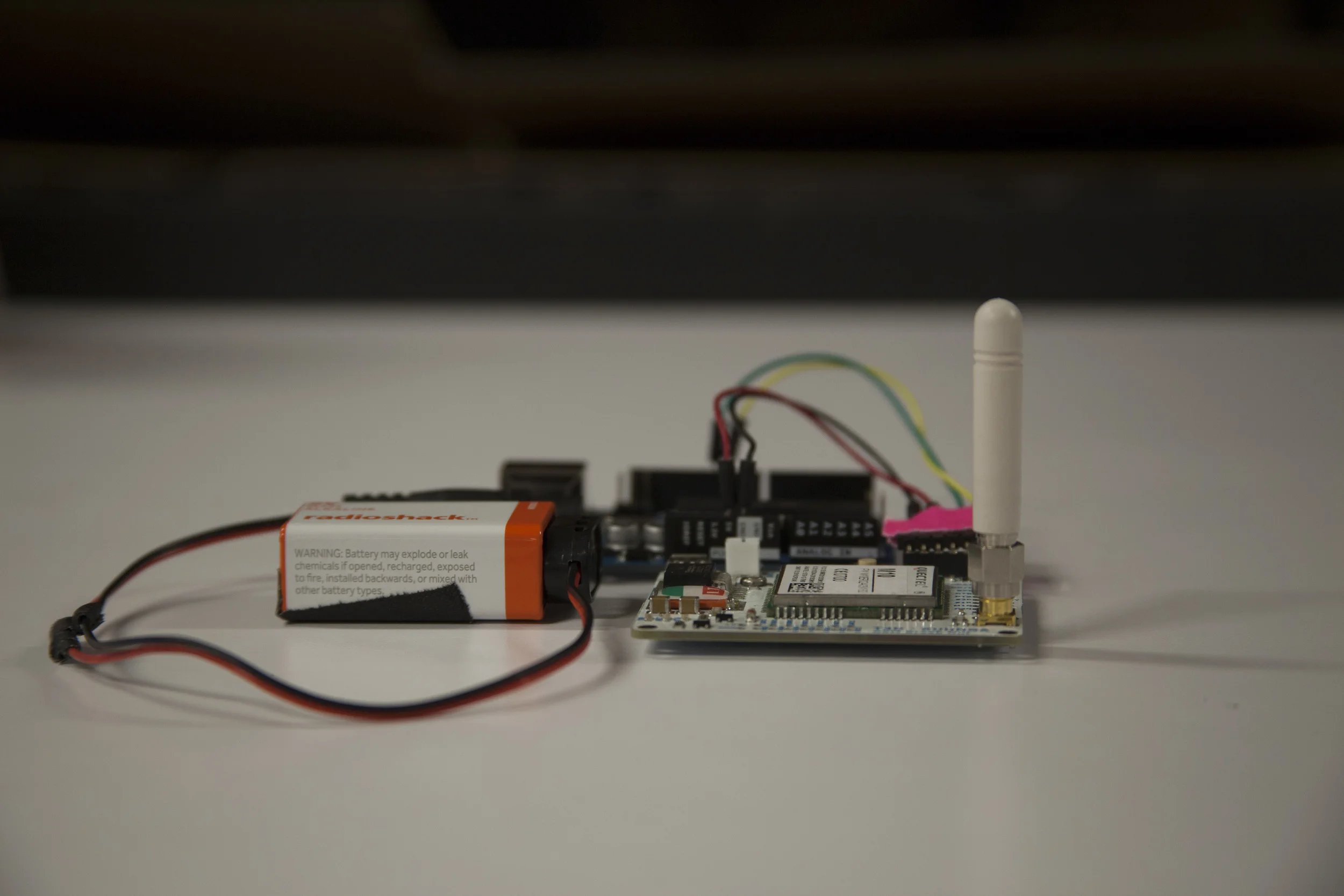

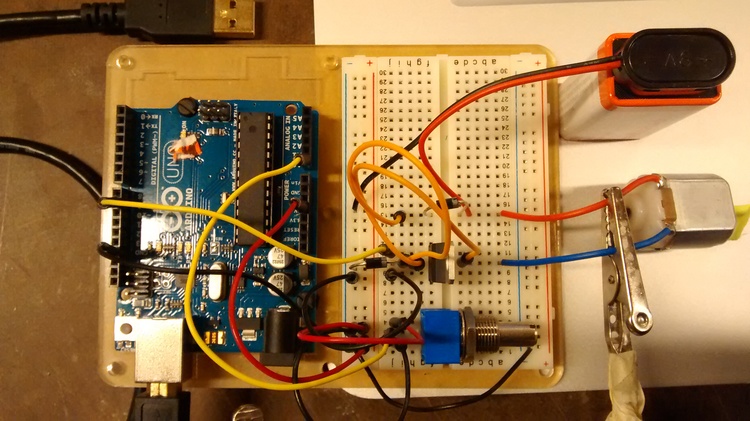

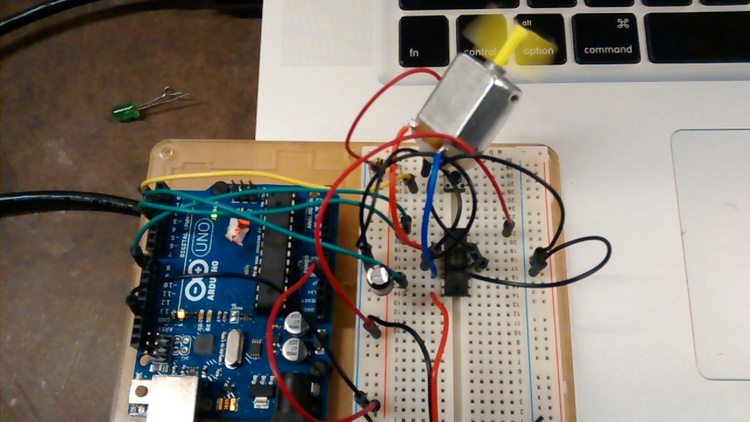

GPS through Arduino and B_GSM Boards

For our GSM class final, I partnered with Clara and Karthik to create a geo-fence hooked up to an Arduino Uno.

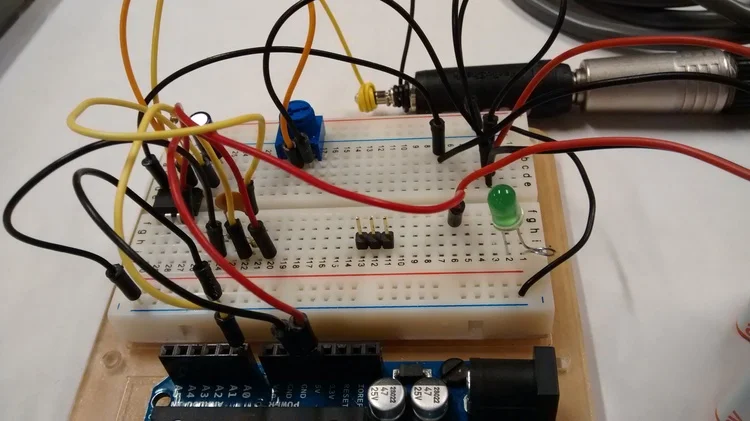

If you're receiving garbled data when reading the incoming data from the GSM board through the Arduino, check that both boards are running at the same baud rate (9600). To change the baud rate of the GSM board (Quectel 10M) through an FTDI adaptor and CoolTerm:

- AT+IPR? //Response (most likely) 115200

- AT+IPR=9600 //Response OK

- [Disconnect], change the baud rate to 9600 in CoolTerm, [Connect]

- AT&W //Save settings. Response OK

Now plug the GSM board into the chosen SoftwareSerial pins (10 and 11). In the code, set the fence center point (homeLat and homeLon), the radius of the fence in km (thresholdDistance), and the phone number it is going to be controlled from (phoneNumber). Then upload the sketch and open the Serial Monitor. The code can be found in this GitHub repo. Enjoy!
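To give an idea of how homeLat, homeLon and thresholdDistance fit together, here is a minimal sketch of a fence check. It is not the code from the repo: it assumes the distance is computed with the haversine formula, the coordinate values are placeholders, and the helper names (toRadians, distanceKm, insideFence) are made up for illustration.

```cpp
#include <math.h>

// Fence settings, named as in the post. The values are placeholders.
const double homeLat = 40.0;            // fence center latitude, decimal degrees
const double homeLon = -74.0;           // fence center longitude, decimal degrees
const double thresholdDistance = 0.5;   // fence radius in km

const double kEarthRadiusKm = 6371.0;   // mean Earth radius
const double kPi = 3.14159265358979323846;

// Hypothetical helper: degrees to radians.
double toRadians(double degrees) {
  return degrees * kPi / 180.0;
}

// Hypothetical helper: great-circle distance in km between two
// points given in decimal degrees (haversine formula).
double distanceKm(double lat1, double lon1, double lat2, double lon2) {
  double dLat = toRadians(lat2 - lat1);
  double dLon = toRadians(lon2 - lon1);
  double a = sin(dLat / 2) * sin(dLat / 2) +
             cos(toRadians(lat1)) * cos(toRadians(lat2)) *
             sin(dLon / 2) * sin(dLon / 2);
  double c = 2 * atan2(sqrt(a), sqrt(1 - a));
  return kEarthRadiusKm * c;
}

// Hypothetical helper: true while the GPS fix is within the fence radius.
bool insideFence(double lat, double lon) {
  return distanceKm(homeLat, homeLon, lat, lon) <= thresholdDistance;
}
```

In a sketch, you would call insideFence(lat, lon) with each new GPS fix and send a message to phoneNumber when it turns false. Keep in mind that on the Uno a double is only 32 bits wide, so very small fences may be affected by rounding.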

The hardware used for this project was an Arduino Uno and one of Benedetta's custom GSM boards with GPS enabled. This setup could potentially work as a stand-alone device; however, it is advisable to make sure beforehand that the power source can supply enough power to run the setup for the intended time.
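A rough way to do that power check is to divide the battery capacity by the average current draw. The sketch below assumes a battery rated in mAh and an average current you have measured yourself. The function names are hypothetical, and it ignores peak current during transmission and regulator losses, so treat the result as an optimistic upper bound.

```cpp
// Hypothetical helper: estimated runtime in hours,
// hours = capacity (mAh) / average current (mA).
double estimatedRuntimeHours(double capacityMah, double averageCurrentMa) {
  if (averageCurrentMa <= 0.0) {
    return 0.0;  // no valid measurement, so no estimate
  }
  return capacityMah / averageCurrentMa;
}

// Hypothetical helper: true if the estimate covers the time you need.
bool powerSourceLastsFor(double capacityMah, double averageCurrentMa,
                         double requiredHours) {
  return estimatedRuntimeHours(capacityMah, averageCurrentMa) >= requiredHours;
}
```

For example, with purely illustrative numbers (not measurements from this project), a 2000 mAh pack at an average of 100 mA gives an estimate of 20 hours.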